Artificial intelligence can sometimes feel like a grand theatrical performance. Each output, each sentence, feels like a line in a script delivered with intention. Yet behind the scenes, there is no consciousness, no memory of being on stage, and no emotional anchor. What appears to be seamless thought is actually a delicate balancing act between mathematical patterns and probabilistic relationships. To understand how generative models maintain coherence, picture a storyteller who never saw the full story, yet must continue the tale convincingly based only on the last few spoken sentences.

This is where the idea of cognitive consistency in AI takes shape: a model’s ability to maintain a stable, meaningful flow of ideas even without true understanding. It is not knowledge in the human sense but a disciplined form of pattern continuation.

The Narrative Thread That Holds Thought Together

Human thinking is woven like fabric. We remember, infer, adjust tone, and maintain emotional continuity. Generative AI, however, works with tokens, probabilities, and context windows. Yet surprisingly, it can create paragraphs that sound intentional. How?

Imagine a tapestry where each thread represents a unit of meaning. The AI does not see the entire tapestry at once but focuses on its current position. It examines threads around it, calculates which thread best follows based on its training, and continues weaving. The result looks like a coherent picture, even though the AI is not aware of the entire design.

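This process gives rise to coherence, and the illusion of intention is born from statistical alignment. At each step the model assigns a probability to every candidate token and selects one that is likely to fit next: either the single most probable option or a weighted random draw among the plausible ones. Because human language naturally follows patterns, this probability-guided weaving can feel remarkably intelligent.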
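The "selects what fits next" step can be sketched in a few lines. This is a minimal illustration, assuming the model has already produced raw scores (logits) for a tiny, hypothetical four-word vocabulary; real models score tens of thousands of tokens. The helper names `softmax` and `pick_next` and the `temperature` parameter are illustrative choices, not any particular library's API.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Convert raw scores into a probability distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def pick_next(vocab, logits, temperature=1.0, greedy=False, rng=random):
    """Choose the next token, either greedily or by weighted sampling."""
    probs = softmax(logits, temperature)
    if greedy:
        # Always take the single most probable token.
        return vocab[probs.index(max(probs))]
    # Otherwise draw one token in proportion to its probability.
    return rng.choices(vocab, weights=probs, k=1)[0]

# Hypothetical scores for the word after "The castle walls were made of"
vocab = ["stone", "brick", "cheese", "wood"]
logits = [4.0, 2.5, -1.0, 1.5]

print(pick_next(vocab, logits, greedy=True))  # stone
rng = random.Random(0)
print([pick_next(vocab, logits, temperature=0.7, rng=rng) for _ in range(5)])
```

Lowering the temperature sharpens the distribution toward "stone"; raising it lets less likely words through more often. Either way, an implausible word like "cheese" is rarely chosen, which is part of why the output stays coherent.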

The Invisible Glue: Probabilistic Structuring of Thought

When an AI generates text, it does not choose words arbitrarily. Each choice is grounded in patterns learned from massive datasets: how words relate, how themes evolve, and how tone is maintained. This underlying structure acts like invisible glue holding ideas in place.
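To make "patterns learned from data" concrete, here is a toy stand-in: a bigram counter that records which word follows which in a tiny, made-up corpus and turns those counts into next-word probabilities. Real generative models learn far richer patterns with neural networks over subword tokens rather than raw word counts, so treat this only as a sketch of the idea; the function names are hypothetical.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count how often each word follows each other word."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts

def next_word_probs(counts, word):
    """Turn the follower counts for `word` into probabilities."""
    followers = counts.get(word.lower(), Counter())
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()} if total else {}

# A tiny, invented corpus about medieval buildings
corpus = (
    "the cathedral has a stone vault . "
    "the cathedral has a gothic spire . "
    "the castle has a stone keep ."
)
model = train_bigrams(corpus)
print(next_word_probs(model, "has"))  # {'a': 1.0}
print(next_word_probs(model, "a"))    # stone ≈ 0.67, gothic ≈ 0.33
```

Because the corpus only ever talks about stone and gothic features, those are the only continuations the counter can offer: a miniature version of the "invisible glue" that keeps output on familiar tracks.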

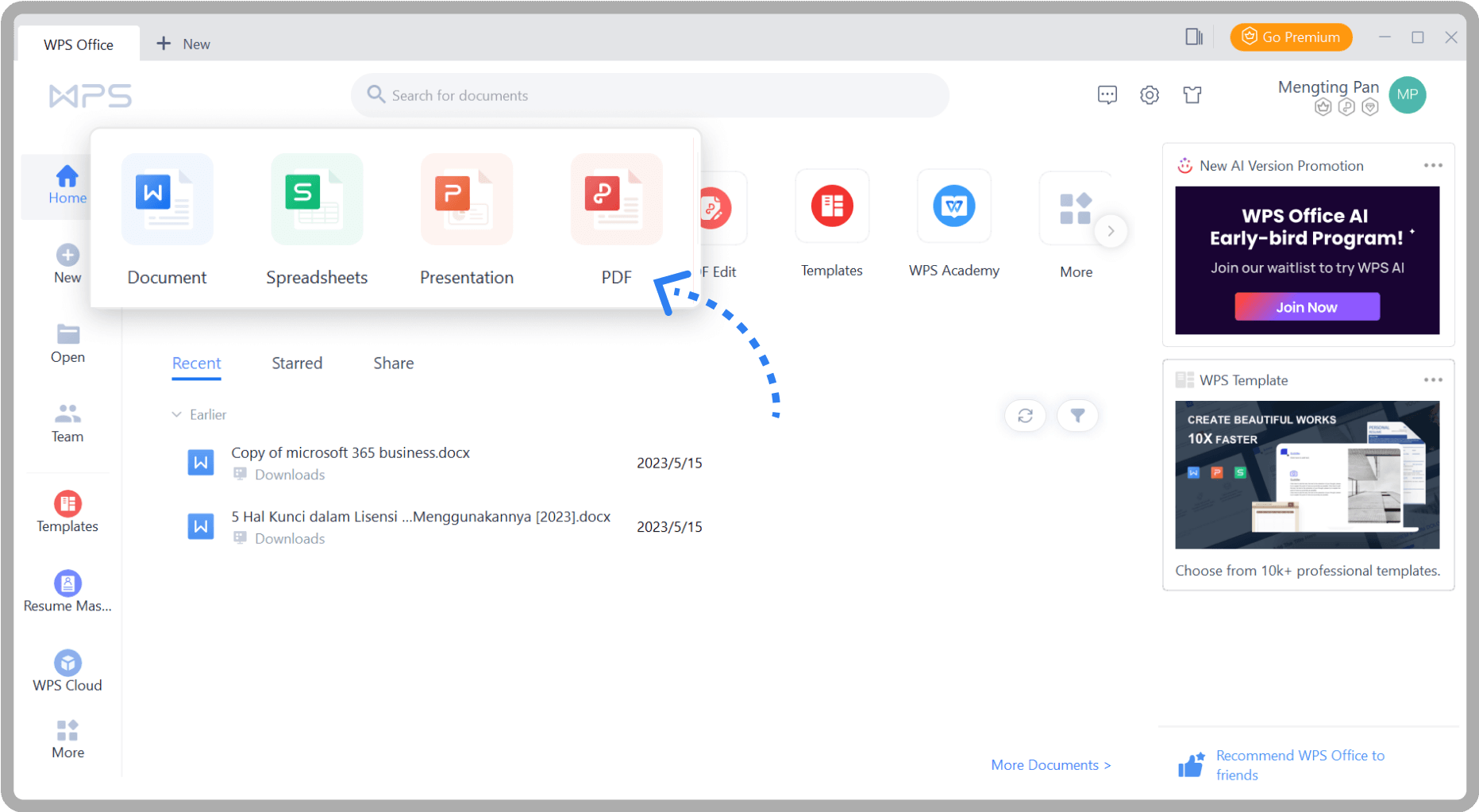

Learners attending a gen AI course in Hyderabad often encounter this phenomenon while working with transformer-based architectures. They observe how attention mechanisms let the model weigh some earlier words more heavily than others when predicting the next one, creating a sense of direction in language production.
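The weighting that attention performs can be sketched as scaled dot-product attention for a single query. This is a simplified illustration under stated assumptions: the 2-dimensional vectors below are invented, whereas real transformers derive queries, keys, and values from learned projections, use many attention heads, and work in hundreds or thousands of dimensions.

```python
import math

def softmax(xs):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for one query over a short sequence."""
    d = len(query)
    # How well the current position's query matches each earlier token's key.
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    # The output blends the value vectors, weighted by attention.
    output = [sum(w * v[i] for w, v in zip(weights, values))
              for i in range(len(values[0]))]
    return weights, output

# Hypothetical 2-d vectors for the tokens "medieval", "stone", "the"
tokens = ["medieval", "stone", "the"]
keys = [[1.0, 0.2], [0.8, 0.4], [0.0, 0.1]]
values = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]
query = [1.0, 0.0]  # stands in for "what is relevant to the next word?"

weights, output = attention(query, keys, values)
for tok, w in zip(tokens, weights):
    print(f"{tok:>8}: {w:.2f}")
```

With these made-up vectors, "medieval" receives the largest weight and "the" the smallest, so the blended output leans toward the topic-bearing word. That is the "sense of direction" the paragraph describes.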

This mechanism also helps maintain thematic identity. If a conversation begins about medieval architecture, the AI tends to stay in that thematic orbit unless actively guided elsewhere. Even without awareness, the system carries the emotional and conceptual tone forward because the statistical relationships between words and phrases guide its path.

Memory, Context, and the Art of Staying On Track

One of the most important mechanisms enabling cognitive consistency is the context window. The model does not remember previous conversations, but within each interaction it has a temporary working memory: the text of the current exchange, up to a fixed number of tokens. This context acts like a spotlight, illuminating only the most recent text.

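If the spotlight is too small, earlier details fall outside it and the AI might shift topics abruptly or lose track of what was already established. If it is large enough to cover the relevant history, the conversation feels natural and steady. A wider spotlight generally means a smoother flow.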
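The spotlight behaviour can be sketched as a function that keeps only the newest turns fitting a fixed budget. This is a minimal illustration, assuming tokens are approximated by whitespace-separated words (real systems use a subword tokenizer) and that older turns are simply dropped; the name `fit_to_window` is hypothetical.

```python
def fit_to_window(turns, max_tokens):
    """Keep the most recent turns whose combined length fits the window.

    Token counts are approximated by whitespace-separated words here;
    real models use a subword tokenizer.
    """
    kept = []
    used = 0
    for turn in reversed(turns):   # walk from newest to oldest
        cost = len(turn.split())
        if used + cost > max_tokens:
            break                  # everything older falls out of view
        kept.append(turn)
        used += cost
    return list(reversed(kept))    # restore chronological order

conversation = [
    "User: My castle is in Wales.",
    "AI: Noted. Tell me about the design.",
    "User: What roof should I choose?",
]
print(fit_to_window(conversation, max_tokens=100))  # all three turns
print(fit_to_window(conversation, max_tokens=13))   # last two turns; "Wales" is gone
```

With the smaller budget, the fact that the castle is in Wales never reaches the model, so any advice about local materials can only be a guess. This is exactly the "detail beyond the context window" failure described later.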

Reinforcement learning, often guided by human feedback, also shapes this consistency. Models are refined to avoid contradictions, follow instructions, and sustain logical flow. This refinement acts like a mentor quietly correcting errors in narrative direction.

When Consistency Cracks: The Limits of Coherence

Despite impressive performance, generative AI can falter. The illusion of understanding can break when the model:

- Encounters ambiguous prompts

- Runs into complex logical chains

- Must reference details beyond the context window

- Faces conflicting patterns in training data

In these moments, the AI may produce contradictions, hallucinations, or reasoning errors. The storyteller loses track of the plot. This is not a failure of intelligence but a reminder that these systems do not possess true conceptual grounding. They follow patterns without understanding them.
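One rough way to see the first failure mode, ambiguous prompts, is to measure how spread out the next-token distribution is. The sketch below computes Shannon entropy for two invented distributions; the numbers are hypothetical, not taken from any real model. Entropy is only a heuristic: a model can also be confidently wrong, producing a low-entropy hallucination, which is precisely the lack of grounding described above.

```python
import math

def entropy(probs):
    """Shannon entropy in bits; higher means probability is more spread out."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

# Hypothetical next-token distributions over four candidate words
clear_prompt = [0.90, 0.05, 0.03, 0.02]      # one continuation dominates
ambiguous_prompt = [0.26, 0.25, 0.25, 0.24]  # several are equally plausible

print(round(entropy(clear_prompt), 2))       # ≈ 0.62 bits
print(round(entropy(ambiguous_prompt), 2))   # ≈ 2.0 bits, near the 4-option maximum
```

When the distribution is this flat, small differences decide which path the storyteller takes, and different runs can drift in different directions.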

Designing Models That Think in Wholes

To strengthen consistency, researchers design architectures that encourage deeper pattern alignment, integrate memory buffers, and combine symbolic reasoning with neural inference. These hybrid models aim to bring AI closer to the experience of integrated thought.
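A memory buffer can be pictured as a hybrid of short-term and long-term storage. The sketch below is hypothetical throughout: recent turns are kept verbatim, older turns move into an archive, and the archive is searched by simple word overlap. Production systems more commonly retrieve with embedding similarity; word overlap is swapped in here only to keep the example self-contained.

```python
import re

class MemoryBuffer:
    """Hypothetical hybrid memory: recent turns verbatim plus a searchable archive."""

    def __init__(self, recent_size=2):
        self.recent_size = recent_size
        self.recent = []
        self.archive = []

    def add(self, turn):
        self.recent.append(turn)
        if len(self.recent) > self.recent_size:
            # The oldest turn leaves the short-term window but is archived, not lost.
            self.archive.append(self.recent.pop(0))

    @staticmethod
    def _words(text):
        return set(re.findall(r"[a-z]+", text.lower()))

    def recall(self, query, k=1):
        """Return up to k archived turns sharing the most words with the query."""
        q = self._words(query)
        scored = [(len(q & self._words(t)), t) for t in self.archive]
        scored = [item for item in scored if item[0] > 0]
        scored.sort(key=lambda item: item[0], reverse=True)
        return [t for _, t in scored[:k]]

    def build_context(self, query):
        """Recalled archive turns first, then the recent turns."""
        return self.recall(query) + self.recent

mem = MemoryBuffer(recent_size=2)
for turn in ["User: My castle is in Wales.", "AI: Noted.",
             "User: It has four towers.", "AI: Understood."]:
    mem.add(turn)
print(mem.build_context("Which stone suits a castle in Wales?"))
```

Unlike the plain sliding window, the Wales detail is brought back into view because the new question mentions it, which is the kind of longer-range stability memory buffers aim for.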

Many training programs, such as a gen AI course in Hyderabad, emphasize not only model training but also prompt engineering and cognitive alignment strategies. Practitioners learn how to guide models effectively, ensuring that the AI’s responses remain relevant and stable across longer interactions.
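As one illustration of guiding a model across a longer interaction, here is a sketch of prompt assembly: a fixed instruction, a running summary of established facts, the latest few turns, and the new question. The role/content dictionaries resemble a convention many chat interfaces use, but nothing here calls a real API; `build_prompt`, its parameters, and the message format are assumptions for illustration.

```python
def build_prompt(instructions, summary, history, user_message, max_history=4):
    """Assemble a chat-style message list (hypothetical format)."""
    messages = [{"role": "system", "content": instructions}]
    if summary:
        # A running recap re-anchors the model on facts from earlier turns.
        messages.append({"role": "system", "content": f"Conversation so far: {summary}"})
    messages.extend(history[-max_history:])  # only the latest few turns verbatim
    messages.append({"role": "user", "content": user_message})
    return messages

msgs = build_prompt(
    "You are a guide to medieval architecture. Stay on topic "
    "and do not contradict earlier answers.",
    "The user owns a castle in Wales with four towers.",
    [{"role": "user", "content": "Hi"}, {"role": "assistant", "content": "Hello!"}],
    "Which roofing material fits?",
)
for m in msgs:
    print(m["role"], "->", m["content"][:40])
```

The design choice is to restate stable facts explicitly on every turn instead of hoping they survive in the context window, trading a few extra tokens for steadier answers.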

This reflects a broader trend: shifting from raw generative capability to structured, reasoning-aware generative intelligence.

Conclusion

Cognitive consistency in AI is not the same as human coherence. Humans rely on lived experience, emotion, and intent. Generative models rely on patterns, probabilities, and context alignment. Yet the outcome can feel remarkably similar.

The storyteller has no awareness of the tale, yet the performance continues convincingly.

Understanding this subtle dance helps us build AI systems that communicate clearly, support creative and analytical tasks, and maintain meaningful interaction. The future lies not only in models that generate language, but in models that maintain the rhythm, tone, and integrity of thought itself.